Griffin: New LLM Architecture Conquers Long Contexts

Recurrent neural networks (RNNs) have fast inference and scale efficiently on long sequences, but they are difficult to train and hard to scale to large model sizes.

When it comes to building efficient and powerful language models, modeling long contexts effectively while cutting cost remains a significant challenge. Google DeepMind's Hawk and Griffin models take strides in this direction: they show a strong ability to leverage extended context windows and present a compelling alternative to traditional Transformer-based approaches.

Introducing Griffin and Hawk

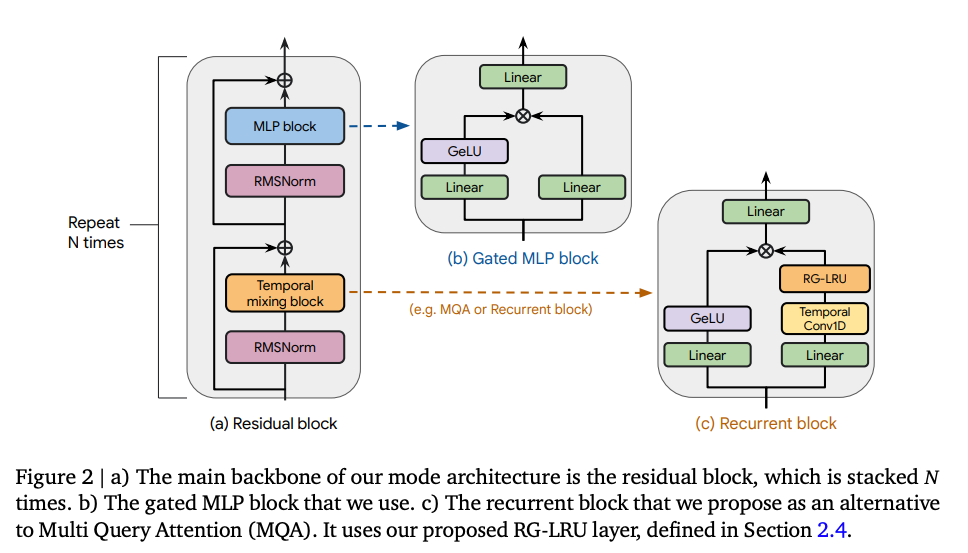

DeepMind proposes Hawk, an RNN built around a new gated linear recurrent layer called the Real-Gated Linear Recurrent Unit (RG-LRU). On downstream tasks, Hawk surpasses the reported performance of Mamba, a recent state-space model.

Griffin, meanwhile, is a hybrid model that combines the RG-LRU's gated linear recurrences with local attention. It achieves performance comparable to Llama-2 despite being trained on significantly fewer tokens. In this way, Griffin addresses the difficulty of training and scaling RNNs and delivers competitive results with reduced computational requirements.
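To make the idea of a hybrid stack concrete, here is a hypothetical helper that lays out which temporal-mixing layers are recurrent and which use local attention. Our reading of the Griffin paper is that it repeats two recurrent blocks followed by one local-attention block; the `period=3` default encodes that reading and should be treated as an assumption, and the helper name is invented for illustration.

```python
from typing import List

def hybrid_layer_schedule(n_layers: int, period: int = 3) -> List[str]:
    """Hypothetical helper: every `period`-th temporal-mixing layer uses
    local attention; all other layers use an RG-LRU-style recurrence.
    The default period of 3 is an assumption, not a confirmed setting."""
    return ["local_attention" if (i + 1) % period == 0 else "recurrent"
            for i in range(n_layers)]

schedule = hybrid_layer_schedule(12)
print(schedule)
print("attention layers:", schedule.count("local_attention"))
```

With this layout only a minority of layers keep any key-value cache at all, and those caches are bounded by the attention window.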

Gated Linear Recurrences

Gated linear recurrences are a variant of RNNs that use gating mechanisms to control the flow of information through the network, and they are central to both models. The recurrence is linear in the hidden state, and at each time step the gates decide how much of the previous state to keep and how much of the new input to write in. Because the state has a fixed size, the model can process long sequences with memory that does not grow with sequence length.
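The sketch below runs a gated linear recurrence in the style of the RG-LRU, one time step at a time. The gate formulas (a recurrence gate r_t and an input gate i_t, a decay a_t = a^(c·r_t) with a = σ(Λ) and c = 8, and the sqrt(1 - a_t^2) scaling of the input) follow our reading of the Griffin paper and should be checked against it. The single scalar channel and the parameter names `w_a`, `b_a`, `w_x`, `b_x`, `lam` are simplifications chosen for illustration; the real layer works on vectors with learned weight matrices.

```python
import math
from typing import List

def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def rg_lru_scan(xs: List[float], w_a: float, b_a: float, w_x: float,
                b_x: float, lam: float, c: float = 8.0) -> List[float]:
    """RG-LRU-style recurrence over one scalar channel (simplified sketch).

    h_t = a_t * h_{t-1} + sqrt(1 - a_t^2) * (i_t * x_t)
    """
    a = sigmoid(lam)                 # learnable base decay in (0, 1)
    h = 0.0
    hs = []
    for x in xs:
        r = sigmoid(w_a * x + b_a)   # recurrence gate
        i = sigmoid(w_x * x + b_x)   # input gate
        a_t = a ** (c * r)           # r near 0 -> a_t near 1: keep the old state
        # The update is linear in h: no nonlinearity touches the previous state.
        h = a_t * h + math.sqrt(1.0 - a_t ** 2) * (i * x)
        hs.append(h)
    return hs

# Constant gates (w_a = w_x = 0): a single impulse decays geometrically.
print(rg_lru_scan([1.0, 0.0, 0.0, 0.0], 0.0, 0.0, 0.0, 0.0, lam=2.0))
```

When the gate weights are zero the gates are constant, so the map from inputs to states is purely linear; with learned weights the gates depend on the current input, which is what lets the layer choose per token whether to retain or overwrite its state.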

The gating mechanism helps the model capture long-term dependencies and retain relevant information over time. By building on gated linear recurrences, Hawk and Griffin overcome many of the difficulties associated with training and scaling RNNs, which makes them powerful language model architectures.
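One reason linearity matters for training: a recurrence of the form h_t = a_t·h_{t-1} + b_t (for the RG-LRU, a_t is the gated decay and b_t the scaled, gated input) can in principle be evaluated with an associative scan rather than strictly step by step. The sketch below checks that a Hillis-Steele style doubling scan, which needs only about log2(T) rounds that could each run in parallel, gives the same states as a sequential loop. This is a general property of linear recurrences, not a description of the kernels Griffin actually uses; the function names are illustrative.

```python
from typing import List, Tuple

# (a_t, b_t) encodes one affine step: h -> a_t * h + b_t
Step = Tuple[float, float]

def combine(earlier: Step, later: Step) -> Step:
    """Compose two steps (apply `earlier`, then `later`). This is associative."""
    a1, b1 = earlier
    a2, b2 = later
    return (a1 * a2, a2 * b1 + b2)

def sequential_scan(steps: List[Step], h0: float = 0.0) -> List[float]:
    """Reference: evaluate the recurrence one time step at a time."""
    h, out = h0, []
    for a, b in steps:
        h = a * h + b
        out.append(h)
    return out

def doubling_scan(steps: List[Step], h0: float = 0.0) -> List[float]:
    """Inclusive scan in ceil(log2 T) rounds; each round's updates are
    independent across positions, so they could run in parallel."""
    acc = list(steps)
    offset = 1
    while offset < len(acc):
        # Reads only the previous round's values, as a parallel step would.
        acc = [combine(acc[i - offset], acc[i]) if i >= offset else acc[i]
               for i in range(len(acc))]
        offset *= 2
    # acc[t] now composes steps 0..t; apply it to the initial state.
    return [a * h0 + b for a, b in acc]

steps = [(0.9, 1.0), (0.5, -2.0), (0.99, 0.3), (0.1, 4.0), (0.7, 0.0)]
print(sequential_scan(steps, h0=1.5))
print(doubling_scan(steps, h0=1.5))
```

A nonlinear RNN such as an LSTM has no comparable associative combine operator, which is part of why it must be unrolled sequentially during training.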

Local Attention

In addition to gated linear recurrences, Griffin utilizes local attention to further enhance its efficiency. Attention mechanisms have played a crucial role in improving the performance of language models by allowing them to focus on relevant parts of the input sequence during processing.

Global attention mechanisms, such as Multi-Query Attention (MQA), have been widely used in Transformer models. However, they come with a significant computational cost: because every position attends to every earlier position, the compute required grows quadratically with sequence length. This makes it challenging to apply global attention efficiently to long sequences.
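A quick count makes the quadratic growth concrete. In causal attention, token t (0-indexed) is scored against t + 1 positions, so a sequence of length T needs T(T + 1)/2 query-key scores per head and per layer. The sketch ignores constant factors such as head dimension and the number of heads.

```python
def causal_attention_scores(seq_len: int) -> int:
    """Query-key scores computed by full causal attention (one head, one layer)."""
    return seq_len * (seq_len + 1) // 2

for n in (1_024, 2_048, 4_096):
    print(n, causal_attention_scores(n))
```

Doubling the sequence length roughly quadruples the number of scores.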

To address this issue, researchers have introduced local attention. Local attention restricts each position to attending only to the positions within a fixed-size window before it, so the cost grows linearly with sequence length instead of quadratically, and the key-value cache never grows beyond the window. By incorporating local attention, Griffin achieves strong performance on downstream tasks while remaining efficient in speed and memory usage.
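The following is a minimal single-head sketch of sliding-window (local) causal attention in plain Python, assuming standard scaled dot-product scores. The window size is a free parameter here; we do not assume the value Griffin uses, and the function names are illustrative. The companion counter shows that each query scores at most `window` keys, so total work is bounded by T × window rather than growing as T².

```python
import math
from typing import List

Vector = List[float]

def local_attention_scores(seq_len: int, window: int) -> int:
    """Query-key scores when each token sees at most `window` positions."""
    return sum(min(t + 1, window) for t in range(seq_len))

def local_causal_attention(q: List[Vector], k: List[Vector], v: List[Vector],
                           window: int) -> List[Vector]:
    """Single-head causal attention where query t only attends to keys
    max(0, t - window + 1) .. t. Requires window >= 1."""
    scale = 1.0 / math.sqrt(len(q[0]))
    out: List[Vector] = []
    for t in range(len(q)):
        positions = range(max(0, t - window + 1), t + 1)
        scores = [scale * sum(a * b for a, b in zip(q[t], k[s]))
                  for s in positions]
        m = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(x - m) for x in scores]
        total = sum(weights)
        out.append([sum(w * v[s][j] for w, s in zip(weights, positions)) / total
                    for j in range(len(v[0]))])
    return out

q = [[0.1, 0.2], [0.3, -0.1], [0.0, 0.5], [1.0, 1.0]]
k = [[0.2, 0.1], [-0.3, 0.4], [0.5, 0.5], [0.1, -1.0]]
v = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [-1.0, 3.0]]
print(local_causal_attention(q, k, v, window=2))
print(local_attention_scores(10, 3))
```

Setting `window` to the full sequence length recovers ordinary causal attention, so local attention can be viewed as the same computation with a banded mask.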

Benefits: Efficiency Breakthroughs for Scalable Language AI

The benefits of these architectures are evident in their performance on downstream tasks. Hawk, built on gated linear recurrences, surpasses the reported performance of Mamba, and Griffin matches the performance of Llama-2 despite being trained on over 6 times fewer tokens. This shows that these models can reach performance comparable to state-of-the-art models while requiring less training data and fewer computational resources.

A key design goal for both Hawk and Griffin was to overcome the computational challenges that neural networks face during training and inference. During inference, both models achieve substantially higher throughput and lower latency than comparable Transformers, making them attractive for real-time applications and services.
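One commonly cited reason for the inference advantage is memory: a Transformer must keep keys and values for every past token, while a recurrent layer keeps a fixed-size state and a local-attention layer keeps at most one window of keys and values. The arithmetic below compares the three. All sizes (24 layers, one shared key-value head as in MQA, head dimension 128, state width 2,048, window 1,024, 2-byte values) are hypothetical and not taken from the paper, and the recurrent estimate ignores small extras such as any convolution state.

```python
def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Keys and values cached for every position in every attention layer."""
    return 2 * seq_len * n_layers * n_kv_heads * head_dim * bytes_per_value

def local_kv_cache_bytes(seq_len: int, window: int, n_layers: int,
                         n_kv_heads: int, head_dim: int,
                         bytes_per_value: int = 2) -> int:
    """Local attention only ever keeps the last `window` positions."""
    return kv_cache_bytes(min(seq_len, window), n_layers, n_kv_heads,
                          head_dim, bytes_per_value)

def recurrent_state_bytes(n_layers: int, state_width: int,
                          bytes_per_value: int = 2) -> int:
    """A recurrent layer keeps one fixed-size state, whatever the length."""
    return n_layers * state_width * bytes_per_value

# Hypothetical configuration, for illustration only.
for T in (2_048, 8_192, 32_768):
    print(T,
          kv_cache_bytes(T, 24, 1, 128),
          local_kv_cache_bytes(T, 1_024, 24, 1, 128),
          recurrent_state_bytes(24, 2_048))
```

Only the first column grows with sequence length; a smaller per-sequence cache leaves room for larger batches, which is one route to higher throughput.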


Subscribe to The MLnotes Newsletter to keep reading this post and get 7 days of free access to the full post archives.